Part 1 of a series on Voice LLMs in Production

Over the past few months, I've been building voice prototypes — from weekend experiments like a conversational tic-tac-toe game to more serious explorations: a real-time bidirectional translator and a production-oriented voice assistant. Each project started with the same assumption: voice is just text with audio. Each one broke that assumption in a different way.

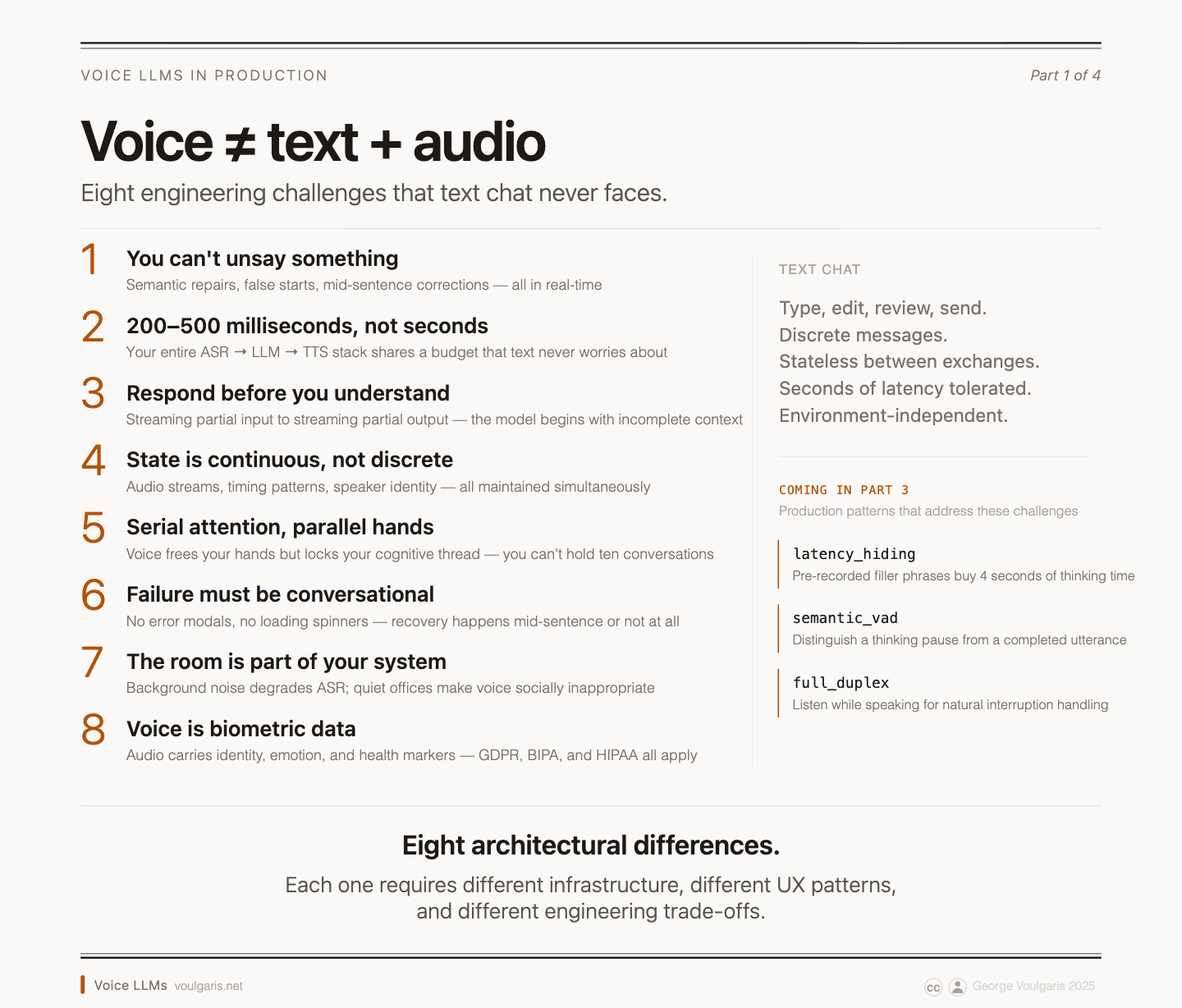

What I've learned is that voice isn't an audio layer you bolt onto a chat system. It's a fundamentally different paradigm — one that requires specialized architecture, conversation design, and user experience thinking. The assumption that voice = text + audio leads to systems that feel awkward, perform poorly, and fail to deliver on the promise of natural conversation.

This isn't just a theoretical distinction. The differences cascade through every layer of your system: from LLM optimization trade-offs to conversation state management, from infrastructure requirements to privacy implications. Understanding these differences is crucial for building voice conversational AI that actually works in production.

Error Correction Changes Everything

In text chat, users can edit their messages before sending, review conversation history, and carefully craft their responses. Voice conversation operates in real-time without the luxury of editing. Once words leave your mouth, they become part of the conversation record — complete with hesitations, false starts, and corrections.

This creates error patterns that your system must handle. Users will naturally say things like "set a reminder for... no wait, create a calendar event for tomorrow at 3pm." Your system must parse these corrections, understand the user's intent, and respond appropriately. Unlike text, where typos are the primary error mode, voice systems must handle semantic corrections, topic changes, and conversational repairs.

What a Voice LLM Actually Is

A voice LLM is not a general chat model with a speech-to-text front end and a text-to-speech back end glued on. Architecturally, it's a different kind of model, optimized for a different job: fast, streaming audio-in / audio-out transduction under a tight latency budget — not deep multi-step reasoning over a completed prompt.

Three properties distinguish voice-optimized models from general-purpose chat LLMs:

- Streaming, not batched. Voice models begin producing output while the input is still arriving, and revise as more audio comes in. A general LLM takes a complete prompt and emits a complete response. That difference drives a lot of downstream architecture: your business logic has to handle provisional results that may change.

- Latency as a first-class constraint. Conversational speech tolerates maybe a few hundred milliseconds of delay before the interaction feels broken. Voice models trade away some reasoning depth to hit that budget. A general LLM that thinks for three seconds is fine in chat and unusable on a call.

- Turn-taking and prosody, not just tokens. Voice models decide when the user has stopped talking, when it's appropriate to speak, and how to modulate tone and pacing. None of this exists in a text model.

But these architectural properties also set a ceiling: a voice model optimized for speed and streaming still relies on its language model to resolve ambiguous audio. When it has never been trained on your domain's vocabulary — drug names, legal terms, company jargon — it will guess wrong. That gap between general and domain-specific performance is the subject of the next section.

These optimization differences cascade through your system architecture. Voice systems must handle streaming, partial, and provisional responses. The business logic must account for the fact that early parts of the conversation might be revised as more audio is processed. This requires different error handling, state management, and user feedback patterns than text-based systems.

Accuracy and Domain Specialization

Even the best general voice model hits a ceiling the moment your conversation moves into specialized territory. The problem isn't model size — it's that the recognizer has never seen your vocabulary, your accents, your microphones, or your conversational patterns often enough to do better than guess. The numbers bear this out: error rates on specialized vocabulary can jump to 10–20% with an untuned model, while domain-specific models cut entity-level errors roughly in half [1]. Specialization is what closes that gap.

Concretely, specializing a voice system means some combination of:

- Custom vocabulary and biasing. Telling the recognizer that words like bupropion, Antigravity, or Voulgaris are in-distribution and should be preferred over their more common near-homophones. Without this, proper nouns and jargon get mangled on first contact.

- Acoustic adaptation to accents, noise profiles, and microphones. The same model transcribes a Pixel phone in a moving car very differently than a conference-room mic in a quiet office. Tuning on representative audio closes that gap.

- Domain-tuned language models. A legal-dictation language model predicts "habeas corpus" where a general model predicts "hey this corpus." The acoustic signal is ambiguous; the language model is what resolves it.

- Per-user personalization. The same acoustic model gets better at your voice over time.

- Turn-taking tuning. Customer-support calls have very different pause structures than casual conversation; a model tuned on support audio handles interruptions and handoffs more naturally.

Why bother? Because the accuracy ceiling is what separates "fun to try" from "relied on daily." A recognizer that hears "Sumatriptan" as "sue Matt rip ten" every fifth call is a pharmacy assistant you can't ship. A voicemail transcriber that loses the caller's name half the time is a feature that erodes trust. A meeting-notes product that can't spell your CEO's name is a demo, not a tool.

The pattern shows up clearly in consumer voice products. Apple's on-device Siri adapts to your voice, contacts, and apps over time [2] — that's not a different base model, it's specialization applied per user. Amazon Alexa's accuracy on music, smart-home, and shopping commands is materially higher than on open-ended questions, because the recognizer is biased toward each skill's vocabulary [3]. YouTube's auto-captions have gotten better language by language as Google trained per-language models [4] rather than relying on one global one. OpenAI's Whisper — the model much of the consumer ecosystem is built on — shows double-digit accuracy swings [5] between generic speech and conversational audio with domain-specific terminology.

The underlying principle is consistent: voice accuracy is a domain problem, not just a model problem. General models plateau. Specialization — on vocabulary, on conversational patterns, on the user's own voice and context — is what gets you from a demo to something people will use every day.

Environmental Constraints That Text Doesn't Face

Voice interfaces face inherent environmental limitations that text interfaces simply don't have. In noisy surroundings, speech recognition accuracy drops significantly — even with high-quality headphones. In quiet settings like libraries or open offices, speaking aloud can be socially inappropriate or disruptive.

These constraints mean voice interfaces cannot universally replace text interfaces. Users require seamless transitions between voice and text based on their environment. Your system must detect these contextual changes and adapt accordingly, maintaining conversational state across different interaction modalities.

While many envision voice interaction like a sci-fi AI companion, the reality demands careful consideration of how voice integrates into the broader user experience. Features like captions when audio is off, keyboard input when speaking isn't possible, the voice agent's ability to highlight on-screen areas, and the user's ability to tap the screen as part of a conversation (it's simpler to say "fix this" than to describe "this") are crucial. Such fallback mechanisms also help manage privacy, since hands-free voice computing means your conversations can be overheard — intentionally or not.

Voice Data Is More Sensitive Than Text

Voice data is inherently more sensitive than text data. Audio contains biometric information, emotional context, and environmental details that text doesn't capture. This creates different privacy requirements and regulatory compliance challenges.

The sensitivity of voice data affects architectural decisions. End-to-end encryption becomes more critical, local processing becomes more attractive despite computational constraints, and data retention policies require fundamentally different approaches than text-based systems. With increasing regulatory attention on voice as biometric data — from GDPR to BIPA to emerging AI disclosure requirements — this is a concern that's becoming more urgent, not less.

The Parallel vs. Serial Paradox

Voice conversation introduces an interesting paradox around parallel processing. Theoretically, voice enables parallel interaction — you can talk while doing other tasks, unlike text which requires visual attention and typing. This suggests voice could enable more efficient multitasking.

However, voice conversation is inherently serial in ways that visual interfaces are not. While you can have ten browser tabs open and switch between them instantly, you cannot maintain ten simultaneous voice conversations without explicitly re-establishing context for each one. The cognitive load of managing multiple conversation threads exceeds human capacity in ways that multiple visual interfaces do not.

Ideally, the voice agent would assist in managing multiple threads, working alongside a visual UI. It could leverage its ability to track time and automatically identify connections across different conversations — enabling a new generation of intelligent assistants that go beyond simple command-response patterns.

Voice + Screen, Not Voice vs. Screen

Rather than voice replacing screen interfaces, the more interesting paradigm is voice using the screen as a complementary input and output device. Voice-first interactions can leverage visual feedback: "Please point to the red area on the diagram" or "Here are the search results — notice that the ABC metric is lower than expected."

This creates new interaction patterns that require coordination between voice and visual interfaces. The system must maintain context across both modalities, handle timing synchronization between speech and visual updates, and manage user attention across different interface elements. The future of voice isn't voice-only — it's voice as the primary interaction layer in a multimodal experience.

Voice Design Is a Distinct UX Discipline

Voice conversation requires design considerations that don't exist in visual interfaces. Tone, pacing, prosody, and emotional context become primary design elements rather than secondary considerations. Conversational hierarchy becomes more important than visual hierarchy.

This means voice design is emerging as a distinct UX discipline requiring specialized skills and design patterns. Traditional UX designers must learn conversational design principles, while conversation designers must understand the technical constraints of voice systems. If your team is building voice experiences with only visual interface designers, you're likely missing critical aspects of the conversational experience.

Building production-ready voice AI necessitates a fundamental shift in architectural and operational thinking, moving beyond text-based paradigms to embrace real-time processing, sophisticated conversation control, and specialized resource management.

What's Next

This is the first post in a series on building voice LLMs for production. In upcoming posts, I'll cover:

- Voice pipeline architectures — a progression from sequential processing to full bidirectional real-time, with guidance on choosing the right level for your use case

- The engineering challenges nobody warns you about — latency budgets, WebRTC gotchas, interruption handling, and why your infrastructure assumptions from text chat will fail you

- Voice AI's unique attack surface — security threats that don't exist in text-based systems, from prompt injection via speech to deepfake voice impersonation

Notes

[1] AssemblyAI, "How Accurate is Speech-to-Text?" — assemblyai.com/blog/how-accurate-speech-to-text

[2] Apple, "Bring your app to Siri," WWDC 2024 — developer.apple.com/videos/play/wwdc2024/10133

[3] Amazon, "Enhance Speech Recognition of Your Alexa Skills with Phrase Slots" — developer.amazon.com/alexa/blog

[4] Google Research, "Automatic Captioning in YouTube" — research.google/blog/automatic-captioning-in-youtube

[5] Radford et al., "Robust Speech Recognition via Large-Scale Weak Supervision" (Whisper paper) — cdn.openai.com/papers/whisper.pdf

— George · voulgaris.net